How to read political polls

Surveys aren't perfect, but we rely on them anyway. Let's learn what to look for.

With an estimated 255 million-plus adults nationwide, it is impossible to survey every likely voter. Instead, political polls survey a sample of people and use their answers to estimate the opinions of the larger population. Because surveys can never capture the full population, they are inherently flawed.

But that does not mean they’re useless! Yes, even after 2016’s unexpected presidential results. The key to reading polls is knowing what to look for.

This post provides a brief overview of how to accurately interpret survey data. First, we’ll cover the basics—how do you interpret polls (and wtf is a margin of error)? Then, I outline four factors you should use to evaluate the legitimacy of survey and poll results. At the bottom are a few links to high-quality survey data for you to do some digging on your own.

Bottom line: Polls can be useful, but they’re never perfect. Knowing what to look for and how to make your own judgments about their quality is an important first step.

Words to know:

Population: The universe of subjects that the researcher wants to describe. In political polls, for example, this might be all registered voters.

Sample: A select number of cases, or observations, drawn from a population. I.e., the people surveyed.

Parameter: The actual (unobserved) value in the population.

Sample Statistic: An estimate of the population parameter calculated from the sample (or survey) data.

Political polls survey a sample of voters to calculate sample statistics, which estimate the parameters of political opinion in the entire population.
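To make the parameter/statistic distinction concrete, here is a minimal Python sketch. It builds a hypothetical population of 100,000 voters in which exactly 52% support a candidate (the parameter, which real pollsters never get to see), then draws a simple random sample of 1,000 and computes the sample statistic. The population, the 52% figure, and the function name are illustrative assumptions, not data from any poll.

```python
import random

def sample_statistic(population: list[int], n: int, rng: random.Random) -> float:
    """Share of a simple random sample of size n coded 1 (supports the candidate)."""
    sample = rng.sample(population, n)  # draw n people without replacement
    return sum(sample) / n

# Hypothetical population: 52,000 supporters (1) and 48,000 non-supporters (0).
population = [1] * 52_000 + [0] * 48_000
parameter = sum(population) / len(population)  # the true value; unknown in a real poll

rng = random.Random(2020)  # fixed seed so the run is repeatable
statistic = sample_statistic(population, 1000, rng)

print(f"parameter (true share):    {parameter:.3f}")
print(f"sample statistic (n=1000): {statistic:.3f}")
```

Rerunning with a different seed gives a slightly different statistic each time; that sample-to-sample wobble is exactly what a margin of error tries to describe.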

First, how to read a political poll:

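The main thing to look at when evaluating a poll is the margin of error. This number tells you how precise the poll results are, or how confident the pollster can be in their estimates. Think of it as a range around each reported number. Polls typically report it at a 95% confidence level: if the same poll were repeated many times, roughly 95% of those ranges would contain the true population value.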
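For a single percentage from a simple random sample, the margin of error is commonly approximated as z × √(p(1 − p)/n), where p is the reported share, n is the number of respondents, and z = 1.96 for 95% confidence. The sketch below applies that textbook formula to a poll of 908 people, using p = 0.5, the value that gives the widest margin and the one pollsters often use when reporting a single figure for the whole poll. It assumes a simple random sample and is not any particular pollster’s method.

```python
import math

def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for a sample proportion.

    p: reported share as a fraction (0.5 = 50%)
    n: number of respondents
    z: 1.96 corresponds to a 95% confidence level
    """
    return z * math.sqrt(p * (1 - p) / n)

moe = margin_of_error(0.5, 908)
print(f"n = 908: +/- {moe * 100:.1f} percentage points")
```

This prints about 3.3 points. A published margin can be somewhat larger than the textbook value, for example when a pollster accounts for the extra variance that weighting adds.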

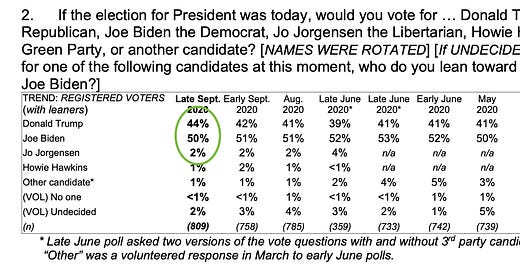

For example, let’s use the most recent national poll from Monmouth University (one of the top political surveys in the country). This poll has 908 respondents and a margin of error of ±3.5 percentage points. Let’s look at what that means for question two: presidential nominee preference.

On the surface, this looks like a 6-point lead for Joe Biden. However, taking the 3.5-point margin of error into consideration, Donald Trump could actually be leading 47.5% to 46.5%! Each result listed is a point estimate: the share of respondents who gave that answer. The margin of error means the true value in the population plausibly falls anywhere within 3.5 points above or below that estimate. Below illustrates how the margin of error operates:
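The arithmetic behind those two scenarios can be sketched in a few lines of Python. The 50% and 44% figures are inferred from the 6-point lead and the 47.5% to 46.5% scenario described above rather than copied from the poll itself, and the helper name is hypothetical.

```python
def poll_range(estimate: float, moe: float) -> tuple[float, float]:
    """Plausible (low, high) range for a reported percentage, given its margin of error."""
    return (estimate - moe, estimate + moe)

MOE = 3.5  # percentage points
biden = poll_range(50.0, MOE)  # inferred from the 6-point lead
trump = poll_range(44.0, MOE)

# The ranges overlap if the leader's lowest plausible value is below
# the trailing candidate's highest plausible value.
overlap = biden[0] < trump[1]
print(f"Biden: {biden}, Trump: {trump}, ranges overlap: {overlap}")
```

Treat this overlap check as a rule of thumb: the margin of error on the gap between two candidates is larger than the margin on either candidate’s number alone, so a lead that looks clear can still sit inside the poll’s uncertainty.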

Reputable polls clearly state their margin of error up front. Generally, the smaller the margin of error, the more precise (and often the higher quality) the poll. (Hint: If you can’t find the margin of error relatively easily, it’s probably not a good poll.)

But what goes into margin of error, and how can we validate the results of surveys on our own?

Four factors to consider when evaluating the legitimacy of poll results:

There are many factors that go into the margin of error and the legitimacy of a survey, but for simplicity’s sake, here are four things you should consider when evaluating results:

Size of the sample.

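Simply put, the more people who are surveyed, the more precise the findings. It is tempting to think of this as a fraction, with the population as the denominator and the number of people sampled as the numerator, but that is not quite how it works. Once a population is large, the margin of error depends almost entirely on how many people are sampled, not on what share of the population they represent.

The more people who are sampled, the more opinions you are capturing. Limiting the scope of the survey to a smaller population, such as a single state or congressional district, does not by itself make a poll more precise: a 1,000-person poll of one district has about the same margin of error as a 1,000-person national poll.

There are two main populations that political polls usually describe: the voting age population (VAP) and registered voters (or the narrower group of “likely voters”). These are just what they sound like. VAP is anyone 18 and up. Registered voters are people who are registered to vote; likely voters are those the pollster judges likely to actually vote, often because they expressed their intention to vote in the survey.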

When you’re reading a survey, look for the “number of respondents” (often denoted by n). All else being equal, the higher the number of respondents, the stronger the poll.
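To see how n drives precision, the sketch below reuses the textbook 95% formula (z = 1.96, p = 0.5) and adds the standard finite population correction, √((N − n)/(N − 1)), where N is the population size. The district size of 700,000 adults is a hypothetical round number; 255 million is the nationwide estimate from the top of this post.

```python
import math
from typing import Optional

def moe_with_fpc(n: int, population: Optional[int] = None, p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error for a proportion, with an optional finite population correction."""
    moe = z * math.sqrt(p * (1 - p) / n)
    if population is not None:
        # Finite population correction: shrinks the margin only when n is a
        # meaningful fraction of the population.
        moe *= math.sqrt((population - n) / (population - 1))
    return moe

# Precision improves with the square root of n, so the gains diminish.
for n in (250, 500, 1000, 2000, 4000):
    print(f"n = {n:>5}: +/- {moe_with_fpc(n) * 100:.1f} points")

# Same 1,000 respondents, very different population sizes.
district = moe_with_fpc(1000, population=700_000)
national = moe_with_fpc(1000, population=255_000_000)
print(f"district: +/- {district * 100:.2f} points, national: +/- {national * 100:.2f} points")
```

Quadrupling the sample from 1,000 to 4,000 only halves the margin of error, and the district and national polls come out essentially the same. That is why the number of respondents matters far more than the share of the population they represent.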

How representative the sample is of the population.

Samples should not only be as large as possible; they also need to accurately reflect the demographics of a population. This can include things like race, gender, and age, but also geography or political orientation. I.e., this is the goal:

In practice, though, samples rarely mirror the population. For example, older Americans are often over-represented, while 18- to 25-year-olds are notoriously hard to reach. This leaves some guesswork in establishing the parameters of an under-represented group.

There are two ways surveys address this: weighting the sample after the survey is completed (often called post-stratification weighting), or purposive sampling (sometimes called quota sampling), which proactively seeks out certain respondents to make sure demographics are represented.

Both approaches rely on ratios. Let’s use Black voters in Alabama as an example: Black voters make up an estimated 27% of the VAP in the state.

A weighted approach performs the survey first, and then corrects any imbalance by emphasizing (or de-emphasizing) certain respondents through multiplication. If there are too few Black respondents, their responses are multiplied by a larger number so that they count for 27% of the weighted sample. If the sample contains more than 27% Black respondents, their responses are multiplied by a smaller number.
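Here is a minimal sketch of that multiplication, sometimes called cell weighting: each group’s weight is its share of the population divided by its share of the sample. Only the 27% target comes from the example above; the sample of 1,000 with 180 Black respondents (18%) and the yes-rates for a single question are hypothetical numbers chosen for illustration. Real pollsters typically weight on several variables at once, which this sketch does not attempt.

```python
def group_weights(sample_counts: dict[str, int], population_shares: dict[str, float]) -> dict[str, float]:
    """Weight per group = (share of population) / (share of sample)."""
    total = sum(sample_counts.values())
    return {g: population_shares[g] / (count / total) for g, count in sample_counts.items()}

def estimate(sample_counts: dict[str, int], yes_rates: dict[str, float], weights: dict[str, float]) -> float:
    """Share answering 'yes' after each respondent is multiplied by their group's weight."""
    weighted_yes = sum(sample_counts[g] * weights[g] * yes_rates[g] for g in sample_counts)
    weighted_total = sum(sample_counts[g] * weights[g] for g in sample_counts)
    return weighted_yes / weighted_total

# Hypothetical survey of 1,000 Alabama adults that under-samples Black respondents.
counts = {"Black": 180, "All other": 820}
targets = {"Black": 0.27, "All other": 0.73}    # population shares to match
yes_rates = {"Black": 0.80, "All other": 0.40}  # hypothetical answers to one question

weights = group_weights(counts, targets)
unweighted = estimate(counts, yes_rates, {g: 1.0 for g in counts})

# Black respondents count about 1.5x; everyone else about 0.89x.
print({g: round(w, 3) for g, w in weights.items()})
print(f"unweighted: {unweighted:.1%}, weighted: {estimate(counts, yes_rates, weights):.1%}")
```

With these made-up numbers, weighting moves the estimate from about 47% to about 51%, purely because the under-sampled group now counts for its full 27%.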

Purposive sampling, on the other hand, looks for enough Black respondents from the get-go to ensure 27% of the sample is Black.
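The purposive approach can be sketched as a simple quota: decide up front how many completed interviews you need from each group, then keep accepting respondents until every quota is full. The 1,000-interview target, the contact stream in which only about 18% of people reached are Black, and the function name are all hypothetical; real recruitment is far messier.

```python
import random
from typing import Iterable

def fill_quotas(contacts: Iterable[tuple[int, str]], quotas: dict[str, int]) -> dict[str, list[int]]:
    """Accept (respondent_id, group) contacts in order until every group's quota is met."""
    accepted: dict[str, list[int]] = {g: [] for g in quotas}
    for respondent_id, group in contacts:
        if group in accepted and len(accepted[group]) < quotas[group]:
            accepted[group].append(respondent_id)
        if all(len(accepted[g]) == quotas[g] for g in quotas):
            break  # stop recruiting once every quota is full
    return accepted

N = 1000
black_quota = round(N * 0.27)  # 27% of completed interviews
quotas = {"Black": black_quota, "All other": N - black_quota}

# Hypothetical contact stream in which only about 18% of people reached are Black.
rng = random.Random(7)
contacts = ((i, "Black" if rng.random() < 0.18 else "All other") for i in range(10_000))

accepted = fill_quotas(contacts, quotas)
print({g: len(ids) for g, ids in accepted.items()})
```

Because Black respondents are reached less often in this made-up stream, the quota simply keeps recruiting until it has 270 of them, instead of fixing the imbalance afterwards with weights.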

Weighted samples are more popular (they are cheaper). But the more tweaking that has to be done to make the survey match the population, the more variance there is in the results, and the larger the margin of error.
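One common way to quantify that cost is Kish’s effective sample size, n_eff = (Σw)² / Σw², where w are the respondents’ weights; the margin of error is then computed with n_eff instead of the raw n. This is a standard approximation, not necessarily the exact adjustment any given pollster uses. The sketch compares light weighting (18% Black respondents weighted up to 27%) with heavy weighting (6% weighted up to 27%); both samples are hypothetical.

```python
import math

def kish_effective_n(weights: list[float]) -> float:
    """Kish's approximation: n_eff = (sum of weights)^2 / (sum of squared weights)."""
    return sum(weights) ** 2 / sum(w * w for w in weights)

def weighted_moe(weights: list[float], p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error using the effective (not raw) sample size."""
    return z * math.sqrt(p * (1 - p) / kish_effective_n(weights))

def cell_weights(n_group: int, n_total: int, target_share: float) -> list[float]:
    """Two-group weights so the group makes up target_share of the weighted total."""
    w_group = target_share / (n_group / n_total)
    w_rest = (1 - target_share) / ((n_total - n_group) / n_total)
    return [w_group] * n_group + [w_rest] * (n_total - n_group)

light = cell_weights(180, 1000, 0.27)  # sample is 18% Black, target 27%
heavy = cell_weights(60, 1000, 0.27)   # sample is 6% Black, target 27%

for label, w in (("light weighting", light), ("heavy weighting", heavy)):
    print(f"{label}: n_eff = {kish_effective_n(w):.0f}, MOE = +/- {weighted_moe(w) * 100:.1f} points")
```

Both polls interviewed 1,000 people, but the heavier the weighting, the smaller the effective sample and the wider the margin of error.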

It’s also good to remember that the data used to establish the demographic ratios in the first place come from surveys themselves, albeit very large ones: Pew Research Center and the U.S. Census Bureau are often used to establish those demographics. Yet another reminder of the fragile nature of surveys.

Survey questions.

How do you ask a question that avoids introducing bias or a partisan response? Do you use words that are familiar to voters, even if they are informal?

For example, the two questions below both ask about the same type of policy (reforming city budgets):

Do you support defunding the police?

Do you support diverting public funds to public mental health support?

But the word choice and phrasing can easily elicit different responses, even from the same person! The science of writing survey questions is an entire field of political science (and one I am admittedly not an expert in). Still, next time you evaluate poll results, take a look at the actual questions that were asked.

FiveThirtyEight rates pollsters and adjusts for each pollster’s partisan lean (its “house effect”), whether that bias comes from who is sampled or how questions are asked. But chances are you can also recognize bias on your own.

Method of collection.

Last, how is the survey administered, and how does that affect who is reached and the answers they provide? Political surveys have historically relied on phone calls, but with the decline of landlines, pollsters have gotten more creative. Internet surveys and phone surveys that include cellphone numbers are modern approaches. In-person interviews are considered the gold standard, but they are expensive.

While a large and representative sample can offset many of the weaknesses of a collection method, it’s important to think about how the method of collection affects the results. For example, if a survey relies only on landline phone responses, you’re likely missing younger people who don’t use landlines. The same goes for online-only surveys, which can miss elderly respondents.

To figure out these factors, look for the survey’s “methodology” or “methodological approach”. It should be easy to find. (And again, a hint: if you can’t easily find information on the methodology and sources, it’s probably not high-quality data.)

So where should I look for good survey data?

Check out the Voter Study Group’s Nationscape survey, put together by Democracy Fund, a nonpartisan research organization. Its up-to-date surveys use purposive sampling, are massive (318,697 respondents and counting), and are conducted weekly. They ask questions on current events, including elections. You can download the data in PDF form here.

For political polling, Monmouth University, the Marist College poll, and the Washington Post/ABC News joint poll are top-notch sources. See a full list here.

FiveThirtyEight, a popular source for polling, actually builds its own forecasts from state and national polls, combined with other factors like the economy and regional unemployment (so they aren’t surveys, but predictions based on surveys). You can check out its 2020 forecast here and read more about its extensive methodology here. It also models how the Electoral College could play out, which is particularly helpful as the gap between the popular vote and the electoral vote continues to grow (but more on that next time…).

Found this helpful? Share with a friend!

Have more questions? Leave a comment!